This is not yet another entry about RGB color calibration 🙂 It is about something that lurks deeper in the dark when you do astrophotography with RGB filters.

If you look at the sensitivity (QE, quantum efficiency) curve of any mono camera sensor, you will quickly notice that none of them is equally sensitive across the visual range of the spectrum. When you image the night sky with RGB filters, you usually take an equal number of frames in each of the R, G, and B channels. It is then fairly easy to apply a proper color balance to the stacked RGB frames and get the desired color in the final image: channels where the camera is less sensitive (usually red) are amplified to match the others. This has a price: the noise recorded in that channel is amplified as well, and it then dominates the picture. We cannot avoid this when imaging with a DSLR or a color camera. With a monochrome camera, however, the root cause of the effect can be eliminated.

To achieve this, the color channels need to be normalized: for channels where the camera is less sensitive, more light needs to be collected. There are two ways to work out how much. One is to analyze the camera's QE curve. The other is to measure a G2V-type star (one with a B-V color index of about 0.65) in each channel. These calculations or estimates do not need to be very accurate; we only want to limit noise in the range where the camera is less sensitive.

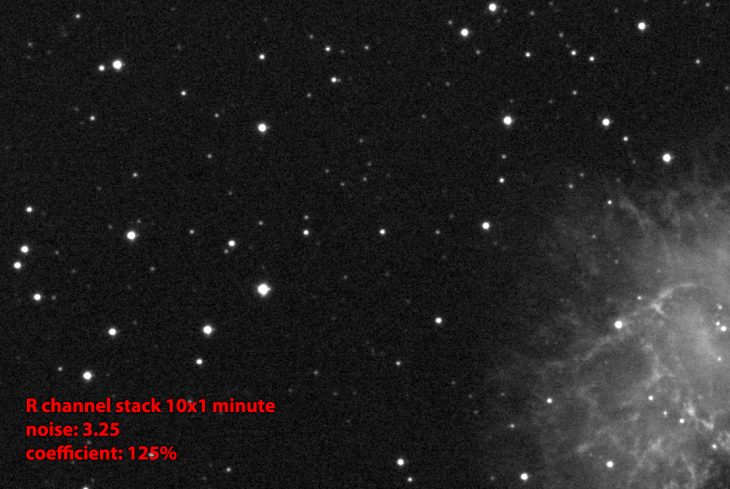

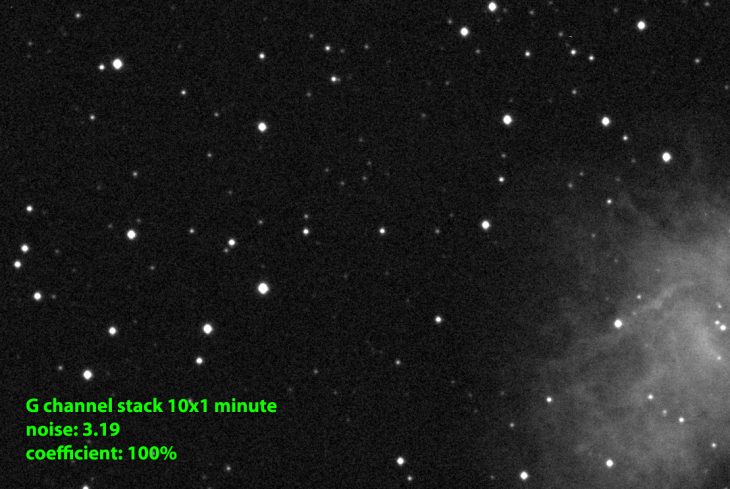
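
The star-based estimate can be sketched in a few lines of Python. This is only an illustration: `channel_gains` is a hypothetical helper, and the flux values are made-up placeholders rather than measurements. It assumes you have already measured the background-subtracted flux of one G2V star in each stacked channel.

```python
def channel_gains(star_flux):
    """Return the gain each channel needs so the star is equally bright in all of them."""
    reference = max(star_flux.values())  # the brightest channel needs no gain
    return {name: reference / flux for name, flux in star_flux.items()}


# Hypothetical fluxes (ADU) of a single G2V star, one value per filter.
# These are placeholder numbers for illustration, not real measurements.
star_flux = {"R": 8000.0, "G": 10000.0, "B": 9000.0}

gains = channel_gains(star_flux)
for name, gain in gains.items():
    print(f"{name}: amplify by {100 * (gain - 1):.0f}%")
```

A gain of 1.0 means the channel is already the most sensitive one; anything above 1.0 tells you how much that channel lags behind.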

Below are three crops taken from stacks of 10 one-minute exposures made with the R, G, and B filters under a suburban sky with a QHY163M camera. They were stacked using the Average method to keep more advanced stacking algorithms from affecting the measurements. Noise in each of these stacks is at a similar level of about 3 ADU. But when a star with a color index of 0.65 was measured in each stack, it turned out that the R channel needed to be amplified by 25% and the B channel by 10% to make the star's intensity equal in all channels. After such amplification the noise of course increases as well.
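
To put rough numbers on this, here is a minimal sketch using the figures quoted above (3 ADU of noise, gains of 1.25, 1.00, and 1.10, stacks of 10 frames). It rests on one assumption that is mine, not measured here: the noise of an average stack falls as the square root of the number of frames, so cancelling a gain of g takes about g² times as many frames. `frames_to_match` is a hypothetical helper name.

```python
import math


def frames_to_match(gain, base_frames):
    """Frames needed so that noise after applying `gain` equals the reference channel's noise.

    Assumes stack noise scales as 1 / sqrt(number of frames).
    """
    return math.ceil(base_frames * gain ** 2)


noise_adu = 3.0      # noise of each 10-frame stack before color balance
base_frames = 10     # frames in the reference stack
gains = {"R": 1.25, "G": 1.00, "B": 1.10}

for name, gain in gains.items():
    amplified = noise_adu * gain                  # noise after color balance
    needed = frames_to_match(gain, base_frames)   # frames to bring it back down
    print(f"{name}: {amplified:.2f} ADU after gain, about {needed} frames to compensate")
```

Under that assumption the red channel ends up near 3.75 ADU after balancing and needs roughly one and a half times as many frames as green to get back to the same noise level.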

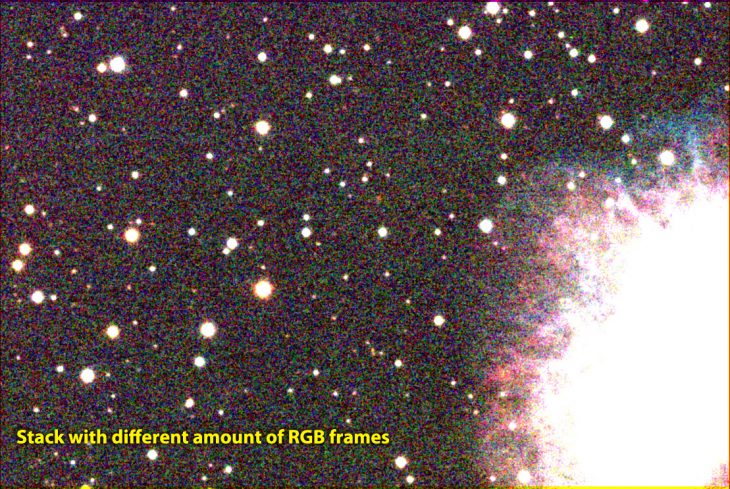

We can increase the number of collected photons in two ways. The first is to take the same number of subframes for each channel but with different exposure times (for example 125 s for R, 100 s for G, and 115 s for B). This also gives roughly balanced color in the stacked images, but it requires additional calibration frames (darks) for each exposure time. The other is to take a different number of subframes per channel with the same exposure time; this is the way I have chosen. In the image below you can compare stacked and stretched RGB images made with an equal number of frames per channel (first) and an adjusted number of frames (second). You can see that red noise dominates in the first image.

Other factors also affect the amount of noise in each channel. One of them is light pollution. Where LP is significant, noise in the red channel increases, so it is worth collecting even more frames with the R filter. Under my suburban sky, I simply collect 50% more subframes with the R filter than with the G and B filters.
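
If you plan a night around a fixed time budget, the 1.5 : 1 : 1 ratio can be turned into frame counts with a small helper. This is just a sketch: `split_session` is a hypothetical function, and the 210-minute budget and 60 s subframes are example values you would replace with your own.

```python
def split_session(total_minutes, sub_seconds, weights):
    """Split a fixed imaging budget into per-filter frame counts, proportional to `weights`.

    Counts are rounded down, so the plan never exceeds the budget.
    """
    total_frames = int(total_minutes * 60 // sub_seconds)
    weight_sum = sum(weights.values())
    return {name: int(total_frames * w / weight_sum) for name, w in weights.items()}


# 50% more red subframes than green or blue.
weights = {"R": 1.5, "G": 1.0, "B": 1.0}

# Example budget (assumed values): 210 minutes of 60-second subframes.
plan = split_session(total_minutes=210, sub_seconds=60, weights=weights)
print(plan)
```

Changing the weights is all it takes to try a different ratio, for example a heavier red weight under a more light-polluted sky.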

Some RGB astrophotography filters are already balanced for specific sensors, like the ZWO LRGB Optimised Filters designed for the ASI1600 camera (the QHY163M has the same sensor). Their RGB bandwidths are adjusted to compensate for the camera's sensitivity in each channel. When you use such filters, you do not need to vary the number of frames between the RGB channels.

Clear skies!